[AC⚡DC]

Discovering Novel LLM Experts

via Task-Capability Coevolution

ICLR 2026

Saturday 25 April 15:15 BRT

[Pavilion 3 P3 - #607]

Abstract

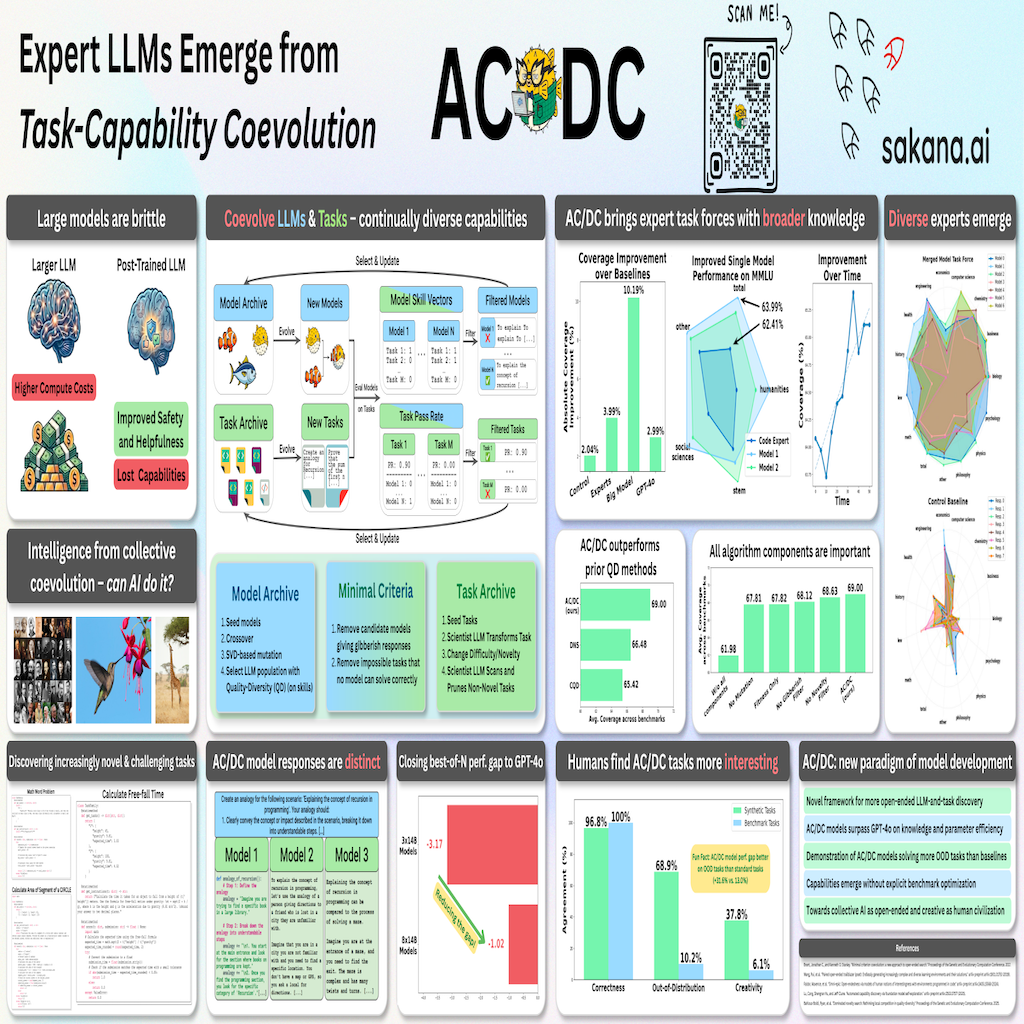

Frontier model developers aim to train models continually to possess emergent, diverse capabilities. To extend capabilities, the current pre-training and post-training paradigm requires manually starting training runs with static datasets or reward functions every time. Addressing this limitation, our work pursues the insight that open-endedness (via the coevolution of models and tasks) can discover models with increasingly novel skills in a single run. We introduce a new model development framework that extends coevolution to large language model (LLM) discovery, open-ended Assessment Coevolving with Diverse Capabilities (AC/DC). AC/DC evolves both LLMs via model merging and natural language tasks via synthetic data generation. AC/DC discovers growing archives of LLMs that surpass the capabilities of larger LLMs while taking up less GPU memory. In particular, our LLM populations achieve a broader Coverage of expertise than other curated models or baselines on downstream benchmarks, without any explicit benchmark optimization. Furthermore, AC/DC improves Coverage over time, continually innovates on tasks and models, and improves performance in multi-agent best-of-N selection. Our findings highlight the potential of coevolution as a means of discovering broader sets of capabilities from base LLMs. Overall, AC/DC brings us one step closer to a profoundly new paradigm of LLM development, where continual improvements to the diversity of model capabilities can be accelerated by leveraging existing models as stepping stones to increasingly powerful models.

Overview

Method

Experimental results

AC/DC discovers different task forces of models that can surpass both GPT-4o and large models from the same model family as the evolved models.

Animated: Projected task embeddings. AC/DC continually generates novel, more challenging tasks, encouraging the discovery of models that push the frontier of collective knowledge.

AC/DC task force (left) has more complementary experts that cover broader knowledge than the control baseline (right) on MMLU Pro subjects.
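As a rough illustration of what "covering broader knowledge" could mean, the sketch below computes a coverage score for a set of models. This page does not define Coverage formally, so the definition used here (the fraction of tasks solved by at least one model in the collection) is an assumption, and the function name, model names, and subject labels are all hypothetical.

```python
from typing import Dict, Set


def coverage(solved_by_model: Dict[str, Set[str]], all_tasks: Set[str]) -> float:
    """Fraction of tasks solved by at least one model.

    NOTE: this is an assumed, simplified definition for illustration,
    not necessarily the Coverage metric used in the paper.
    """
    if not all_tasks:
        return 0.0
    # Union of everything any model solved, restricted to the known task set.
    covered = set().union(*solved_by_model.values()) & all_tasks
    return len(covered) / len(all_tasks)


# Hypothetical toy data: which subjects each model answers correctly.
tasks = {"math", "law", "biology", "physics"}

# Complementary experts: each model is strong in different subjects.
task_force = {
    "expert_a": {"math", "physics"},
    "expert_b": {"law"},
}

# Overlapping models: both are strong in the same subjects.
baseline = {
    "model_x": {"math"},
    "model_y": {"math", "physics"},
}

task_force_coverage = coverage(task_force, tasks)
baseline_coverage = coverage(baseline, tasks)
print(task_force_coverage, baseline_coverage)
```

Under this assumed definition, the two collections have the same number of models, but the complementary one scores higher because its members fail on different subjects rather than the same ones.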

We conducted a human study on synthetic tasks from an AC/DC run. Participants found samples of AC/DC tasks more interesting and creative than samples of diverse standard benchmark tasks.

Conclusion

In conclusion, AC/DC represents a paradigm shift from scaling individual models toward deliberately developing complementary agent collectives. Via AC/DC, LLMs may one day embody the engine that drives both knowledge acquisition and transformative creativity, enabling discoveries that improve both their own inner workings and the outer-loop environment that they may transform and adapt to in tandem with humans and other AI systems. After all, cultural (co)evolution has been producing leaps of serendipitous invention, from the vacuum tube to the computer, in spite of decentralized goals and intelligence. With AC/DC, we demonstrate a first step towards this vision, bringing us closer to discovering collective AI that is as open-ended, complex, and creative as human civilization.

Citation

@article{dai2026discovering,

title={Discovering Novel LLM Experts via Task-Capability Coevolution},

author={Dai, Andrew and Meinardus, Boris and Regan, Ciaran and Tian, Yingtao and Tang, Yujin},

journal={arXiv preprint arXiv:2604.14969},

year={2026}

}

Acknowledgements

We thank the Sakana AI research team, in particular (in alphabetical order), Johannes Ackermann, Takuya Akiba, Sam Earle, Simon Guo, David Ha, Shengran Hu, Yuichi Inoue, Llion Jones, Akarsh Kumar, Robert Lange, Sebastian Risi, and Alex L. Zhang, for helpful discussions and feedback. We also thank Koshi Eguchi and Kou Misaki for providing technical support and maintenance during our experimental runs on our compute cluster.

The website template was borrowed from Jon Barron. The music track used in the video is "Forever" by Seba.